This blurb originally appeared in CT No.118: Robot Basquiat + the best email service providers.

Actual content technology made the news! DALL-E 2 is not fintech in disguise, not social media anxiety, and is actually innovative. It draws teddy bears using 80s computers and badly interpolates Basquiat. What's not to love?

Powered by Microsoft-funded OpenAI, DALL-E 2 draws complex images in a variety of styles on command from a well-trained visual neural net and advanced language processing. It can also draw abstract ideas, but since computers don't have ideas of their own, the results are often uncanny or muddy.

On its website, OpenAI claims that DALL-E 2 has learned the relationship between images and the text used to describe them, and that it generates new images through a process called diffusion.

How it works: DALL-E 2 was trained on a large dataset of images and their descriptive text captions, and it generates new images from text prompts based on what it learned from that dataset.

It can also edit or replace parts of images through another process called inpainting, which isn't unlike Photoshop's Content-Aware Fill.

I mean, that's what it says on the website. Only a few select hypebeasts/researchers actually have access to draw with DALL-E 2. OpenAI's trial interest form keeps telling me I don't pass the CAPTCHA test, so a trial doesn't look possible for me, a person, probably because all of DALL-E's fellow robots got there first.

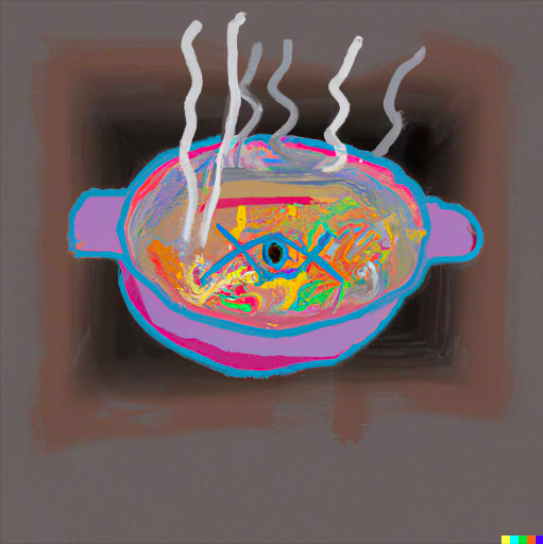

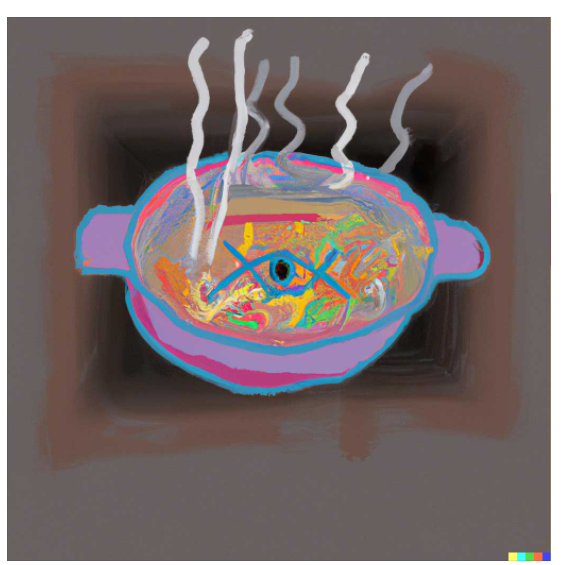

Here's the DALL-E website example for "A bowl of soup that looks like a portal to another dimension in the style of Basquiat":

Tech disruption disciples gush that DALL-E 2 will replace artists and illustrators, as if entering a concrete command into a computer is exactly the same as making art, or even investing in custom content. Why the first goal of disruption is replacing any person, let alone artists (who don't make any money anyway), should be deeply interrogated.

I'm genuinely impressed by DALL-E 2's speed and flexibility, but like most current AI, it's a cute toy that replicates the biases of digital culture, just in new combinations. Most of its creations look pulled from a fan art archive or a stock photo library, probably because those were its training data. The "wow tech is neat" part of me loves it; the aesthete wishes that the folks singing the limited demo's praises took the time to understand more real-world art.

With this model and its accompanying hype, I can see a future for content and UX designers: convincing executives and clients that yes, they can go with the computer-generated images, but just because a computer drew it doesn't mean it will serve a unique brand identity or attract an audience.

However, considering the vast number of corporate stock photos I saw recently on websites while doing competitive research for clients, maybe we could use some uncanny valley DALL-E 2 images to shake things up.
