This essay originally was published on December 8, 2022, with the email subject line "CT No. 145: Let the bots reignite your snobbery."

If you’ve been paying attention to all the machine-generated content that’s flooding the internet this year, you might be concerned with the state of digital content industries, wondering if you will have a job in a few years. If this sounds like you, follow this advice:

Never work for someone who would replace you with a robot if they had the chance.

Yes, it’s challenging to follow. If you work for any company with a CFO, chances are high they would replace you with a robot immediately when given the opportunity. But in general, if you have the privilege of being selective about your employer, make sure at least the CEO values your humanity on a fundamental level, and bring that humanity to work.

Because here’s the thing about what’s now being called AIG (AI-generated) content*: The companies who would use machine-generated content for copywriting or illustration were never going to pay you a living wage anyway. They’re happy they no longer have to go on Fiverr and search for the cheapest possible human illustrator or copywriter, someone whose work they’re using to fill in the web templates with content. Business leaders who think AI will solve their problems swear that as long as they have the content in place, the business will do just as well.

They’re wrong, of course. But the problem is that businesses are inundated with bad copy and bad internet content: most of what they read on internet forums is bad copy, and pretty much every other B2B website, white paper and blog post they read has subpar copy.

Bad copy abounds because:

- Most online business writing is bad on every level, from idea to sentence

- because many tech businesses have not valued good writing

- because simply being in the digital marketplace and using ad tech at a basic level has, for a long time, meant that anyone could build a fast-growing business where they didn’t have to care about content quality

Business owners who don’t care about content quality also don’t believe in building brand equity, understanding context or valuing originality; they just want something in place so they can go back to executing on their ad-based business model.

But we’re at the point with technology adoption where simply buying ad impressions doesn’t reap the easy benefits it used to. In the past five years, most businesses and marketers have realized that purchased visibility doesn’t scale without a strong brand, quality content, and stellar digital customer experiences.

Luckily for us, the leader of the tech pack seems to have thrown down some sort of gauntlet.

*I hate this term and much prefer “machine-generated content” or “natural language generation,” which is what we were calling the tech before the most recent hype cycle.

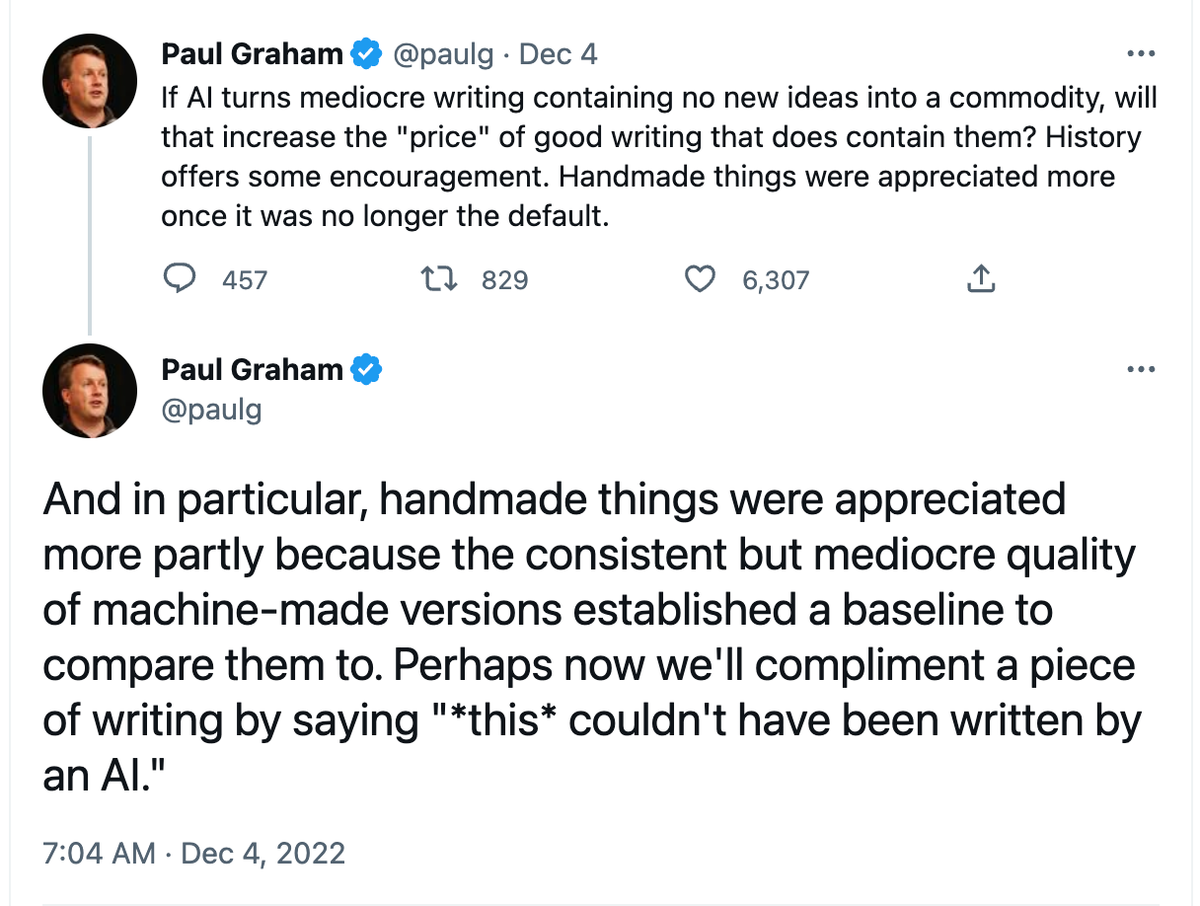

The billionaire said it: Paul Graham recognizes that quality content and craft are important

If you’re unfamiliar, billionaire Paul Graham is a founder of YCombinator, the tech accelerator responsible for many of your favorite online businesses, as well as the ones you hate. He is a venture capitalist who writes meandering blog posts with marginal value other than that he is a very rich man, and people listen to whatever he says in hopes they, too, will become rich.

And this week, to my shock and horror, I agreed with him.

What-ho, Paul! I’m happy to chat with you about your misperception of the effects of industrialization on artists, but, putting that aside, are you talking about how you value writing craft, the process of shaping meaning through considered language? A word and concept that’s been cast aside by the technology industry for decades in favor of logic, speed and efficiency?

Perhaps now we’ll compliment a piece of writing indeed.

In the above tweet, Graham is writing about ChatGPT, a new natural language generation (NLG) product from OpenAI (his wife is an investor, and he’s cozy with CEO Sam Altman). If you aren’t familiar with ChatGPT, just look it up on literally any online platform with a search function and you’ll see plenty of examples from the past week. If you want a more in-depth explainer, here’s NYT’s Kevin Roose in typically breathless style, embedding tweets instead of conducting original reporting (and quoting zero women, which tends to happen in tech coverage).

ChatGPT responds to open-ended text queries with paragraphs of text-based answers. It can create script-like dialog, write code, and provide somewhat factual answers for questions, as long as they are not about individual people or events after 2021.

ChatGPT is imperfect for many reasons: it spits out wrong answers, useless equivocations, and empty words in the thousands. Even Ben Thompson points out that it’s completely wrong.

But the speed at which it creates sensible paragraphs and essays, its responsiveness to open-ended queries, and its scale are impressive, at least from a tech perspective. It rarely gets the grammar wrong, which is a tricky thing to teach a computer. It knows the names of characters in How I Met Your Mother, which I definitely don’t. Yes, it is just copying patterns, and it is a “stochastic parrot” like all NLG tools based on large language models (LLMs). But its call-and-response function works impressively.

Predictably though, like many of the tech folks who think it’s the best toy since the last best toy, ChatGPT is a horrible writer. Its sentences are long and awkward; you wish they’d programmed some Hemingway App into the thing. Its vocabulary is limited. The words it spits out are often technically correct, but soulless and devoid of meaning.

It’s not just a bad writer because it’s a bot. Compared with other natural language generation tools that aren’t freely available to the public, ChatGPT is bad at writing. Its vocabulary is limited and its grasp of syntax is minimal. It can construct a variety of sentences in a paragraph, but doesn’t understand that paragraphs should differ.

And if you enjoy writing at all, ChatGPT’s output is exceptionally dull, the complete opposite of the engagement-optimized, ire-filled social content that drew many users to digital channels in the first place. Other machine-powered text generators can do much better, from a stylistic standpoint.

However:

While it deeply pains me to admit that I think a venture capitalist billionaire is correct, I agree with Paul Graham here. Because when it comes to most business writing, the marketing blog posts you read, the SaaS websites, the white papers and research, everything they trained the Large Language Model (LLM) on—most of the writing is bad.

And because it’s bad, tools like ChatGPT are not taking jobs, or at least not good ones. While a full-time job as a Fiverr writer might not be in the cards for that much longer, editorial roles and careers that straddle tech and content (like yours, if you’re here) will grow. Audiences aren’t stupid, particularly those who regularly read online. They like to read interesting words, unique combinations of sentences, and valuable ideas.

What ChatGPT does do well is provide examples of bad writing, ad nauseam: How empty ideas read; how hype without critique multiplies exponentially until the point of boredom.

But I certainly can’t churn out reasonable, sensical copy that fast, thoughtful or rote. For a business seeking copy, if they want speed and nothing else, ChatGPT is a better choice.

But on the whole, from a content perspective, I believe that ChatGPT will raise the bar and create higher standards for digital work.

Building new models focused on craft and quality

Ten years ago, if you had told me the future of digital content was scores of people screenshotting badly written chat copy and posting the screenshots of the writing on a decimated Twitter, I would have thought more critically about changing career directions to focus on digital greenfields. With ChatGPT, as with much mass-produced digital content, the copy is bad, and the rhetoric through posting is worse. It’s not worth arguing with those folks, or pointing out what good writing looks like. In the search for the best toy that would prevent them from having to talk with other humans, they weren’t going to read good writing anyway.

But ten years ago, I also didn’t understand that we are at the very beginning of a long journey—that after the technology is introduced, there’s a long period of fine-tuning, experimentation, and growth. Not the kind of experimentation and growth that marketers talk about, with a veneer of science painted on top, but creative experimentation and slow, intentional organic growth, like the film and magazine industries in the 1920s and ’30s, or television in the ’90s and ’00s. Instead of thinking that we’re at some death of a golden era, what if we saw ourselves as using the old garbage for compost and building better systems that serve an artistic culture, rather than the slipshod oopsies of feeds from the last decade?

The demand and potential for more editorial roles and new digital content businesses

The Believer and its beautiful website are returning. The Rumpus is still here, publishing new writing daily. New writing projects have proliferated on Substack and Medium, which aren’t necessarily making new writers a lot of money, but certainly giving them a taste for digital publishing. Readers are once again somewhat excited about the internet. Folks are building gorgeous magazine-style websites in Webflow and Ghost. Search demand for “good content” is at an all-time high. Sites like The Kool-Aid Factory and MakerPad have developed high-quality resources to help community-focused publishing businesses grow.

Artists, writers, and creators are making brilliant pieces of content and systems with digital tools, and it’s spilling over to general audiences, businesses, the normies who don’t spend their whole days trapped in feeds. There’s a lot of garbage to sort through, and new skills to learn. But from my view, compared with the last 25 years of online publishing, there’s never been a better time for writers and editors to communicate directly with an audience, build a library, and stake their claim.

On the traditionally boring B2B publishing side, where I make my living, there’s a high demand for good writing and high-quality content. Real talk: I have a client who is so stoked about the English majors their sales-first company just hired to work on editorial and training AI systems, he’s been mentioning them on every call, like, “We have English majors now!” Because companies are learning that they make more money when they have higher-quality content on their websites, in their products, in their experience.

You can view it cynically because yes, it’s capitalism (that’s not going away). If you publish independently, you can say “they’ll just scrape my content and use it to train a bot.” Probably! But all the bot can do with your content is glean your patterns and syntax, picking up your embarrassing verbal crutches rather than your original thoughts, the ideas that a computer still cannot generate on its own.

Lest you get precious about the marginal face value of your intellectual property in a large language model, remember that your alternative in previous eras was to be a Jack Kirby: an artist who created for hire, whose creations ostensibly trained the bot that is the Marvel Cinematic Universe. Kirby never got the credit or the financial rewards for the content machines he created either.

The mass communications systems developed in the twentieth century were no more rewarding to artists and creators than the current wave of short-lived tech content experiments. Corporate media consolidation kills creativity much faster than any bot.

But with the direct-to-reader tools available today, it’s possible to build a long-lasting publishing business that serves an audience that wants to read online. The demand is there; legacy publishers just have no idea how to monetize it. They don’t think creatively or understand the technology, and they rely heavily on outdated assumptions about media production and audience behavior. And the tech guys, the ones who can build the new frameworks? They still have no idea what good content looks like.

So if Paul Graham is challenging people to write better than ChatGPT and build better businesses with better content, I’m going to take him up on it. Because once you start seeing great content, it’s hard to return to the garbage.
