AI & Algorithms

Latest stories

71 posts

Perception, intention, and poetic license: What is the attention mechanism that powers LLMs?

How does AI make sense of language?

Disambiguation, sliding doors, hallucinations, and madeleines: How transformers process, clarify, and produce language

Tokens and vector embeddings: The first steps in calculating semantics for LLMs

When words and math collide: Old-school tried and true language processing algorithms

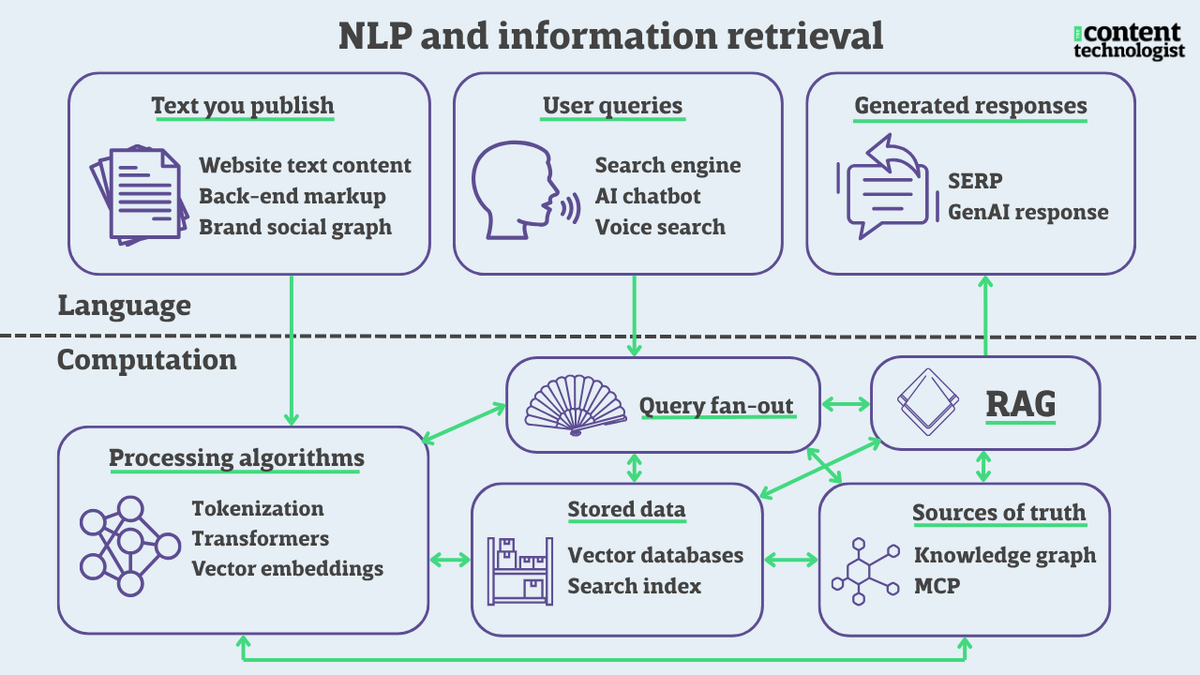

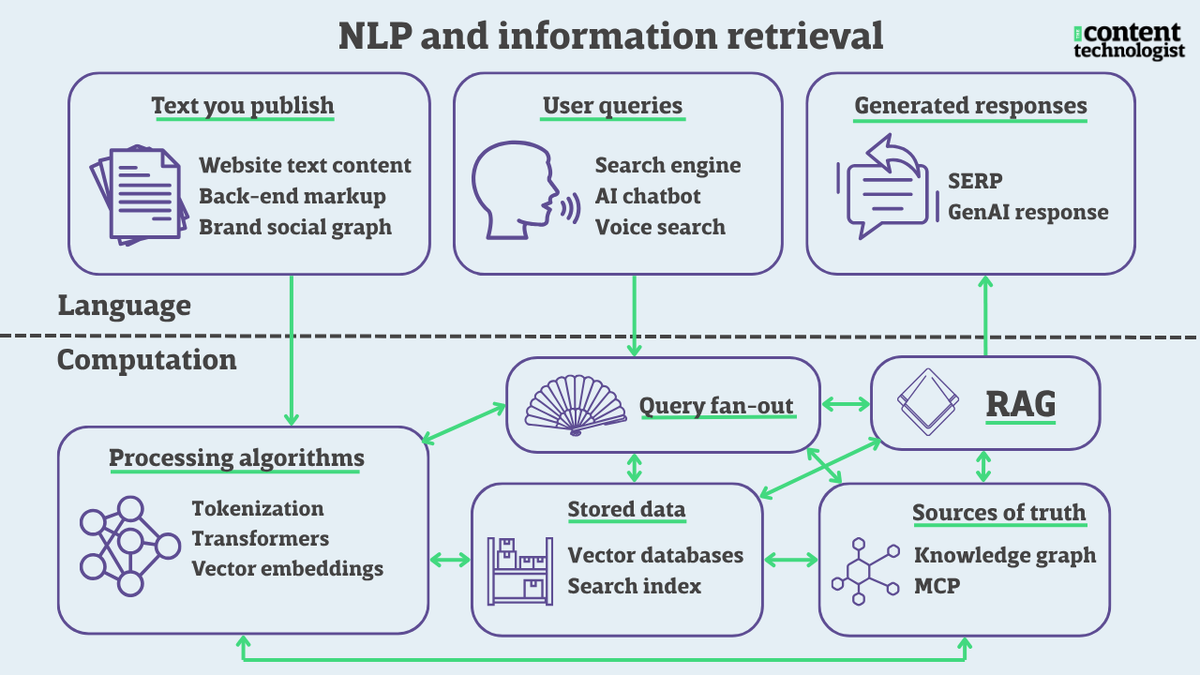

The front door to discovery: How natural language processing is the key to visibility in LLMs